ABOUT

PERSONAL DETAILS

I am passionate about complexity science.

Welcome to my academic profile. I am available for consulting and collaboration.

BIO

MY RESEARCH

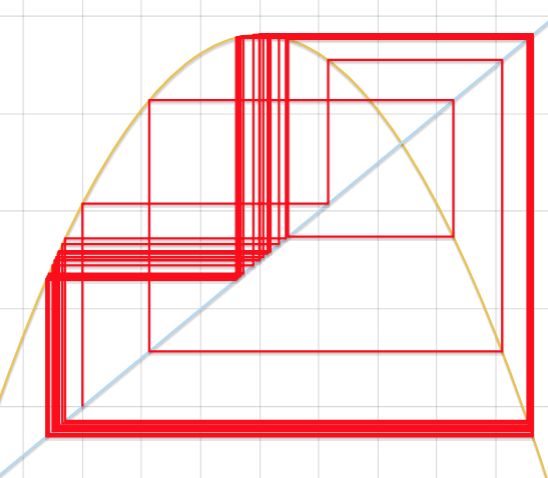

In the study of complex adaptive systems, the available data often falls far short of what the theory demands. In mathematics, for instance, we often make statements like “assume we have an infinite, noise-free time series; then…” In studying complex systems, by contrast, we often face questions like “I have 347 noisy observations that I collected over three years in a jungle. What can we learn from them?” My research aims to develop rigorous models that bridge this gap between theory and observation, where the data may be wildly lacking in the eyes of mathematics yet still contain valuable information about the system. Put differently: when perfect isn’t possible, how can we adapt mathematics to describe the world around us? In studying complicated, ill-sampled, noisy systems, my work focuses on understanding how much information is present in the data and how to extract it, understand it, and use it, without overusing it.

Specifically, I am working toward a parsimonious reconstruction theory for nonlinear dynamical systems. I also aim to leverage information mechanics (e.g., the production, storage, and transmission of information) to gain insight into important but imperfectly observed systems, such as the climate, traded financial markets, and the human heart. My hope is that this combination of new mathematical theory, analysis, and application can eventually shed a little more light on universal phenomena such as emergence, regime shifts, and phase transitions.

I received my Ph.D. from the University of Colorado under the supervision of Elizabeth Bradley; my dissertation introduced a new paradigm in delay-coordinate reconstruction theory. Before that, I earned an M.S. in Applied Mathematics, also from the University of Colorado, where I constructed dynamical models of computer performance, and a dual B.S. in Mathematics and Computer Science from Colorado Mesa University.

HOBBIES

INTERESTS
