THOTH

A Python package for the efficient estimation of information-theoretic quantities from empirical data.

Participants: Simon DeDeo, Robert X. D. Hawkins, Sara Klingenstein.

Programming consultant: Quan Minh Bui.

Information is an essential part of the natural and social worlds. Since Shannon’s pioneering work in the 1940s, we have known how it can be quantified. But estimating these quantities from limited data is more difficult than it might seem.
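To make the difficulty concrete, here is a minimal sketch in plain Python. It does not use THOTH, and every function name in it is hypothetical. It draws small samples from a uniform distribution over 8 states, whose true entropy is 3 bits, and averages the naive "plug-in" estimate over many trials. It also shows the Miller-Madow correction, one standard non-Bayesian adjustment; whether THOTH implements this particular correction is an assumption.

```python
import math
import random
from collections import Counter


def plugin_entropy(counts):
    """Naive (maximum-likelihood) entropy in bits from a list of counts."""
    n = sum(counts)
    return -sum((c / n) * math.log2(c / n) for c in counts if c > 0)


def miller_madow_entropy(counts):
    """Plug-in entropy plus the Miller-Madow term (K_observed - 1) / (2N), in bits."""
    n = sum(counts)
    k_observed = sum(1 for c in counts if c > 0)
    return plugin_entropy(counts) + (k_observed - 1) / (2 * n * math.log(2))


def average_estimate(estimator, n_states=8, n_samples=10, n_trials=2000, seed=0):
    """Average an estimator over many small samples from a uniform distribution."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_trials):
        draws = [rng.randrange(n_states) for _ in range(n_samples)]
        total += estimator(list(Counter(draws).values()))
    return total / n_trials


true_entropy = math.log2(8)  # 3 bits for a uniform distribution over 8 states
naive = average_estimate(plugin_entropy)
corrected = average_estimate(miller_madow_entropy)

print(f"true entropy:      {true_entropy:.3f} bits")
print(f"plug-in average:   {naive:.3f} bits")
print(f"Miller-Madow avg.: {corrected:.3f} bits")
```

With only 10 samples, the plug-in average falls below the true value, because a small sample cannot cover the 8 states evenly. The correction raises the estimate by an amount that shrinks as the sample size grows.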

THOTH allows for the efficient and consistent estimation of entropy, mutual information, Jensen-Shannon divergence, and other quantities essential to the understanding of uncertainty, prediction and information-processing in real-world systems. It was written as part of the analysis for this paper.
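For readers unfamiliar with these quantities, the sketch below computes naive plug-in versions of entropy, Jensen-Shannon divergence, and mutual information from discrete counts. It is written in plain Python and is not THOTH's API; the function names are hypothetical, and it applies none of the bias corrections that a careful estimator would use.

```python
import math


def normalize(counts):
    """Turn a list of counts into empirical probabilities."""
    total = sum(counts)
    return [c / total for c in counts]


def entropy_bits(p):
    """Shannon entropy H(p) in bits; zero-probability bins contribute nothing."""
    return -sum(x * math.log2(x) for x in p if x > 0)


def jensen_shannon_divergence(counts_a, counts_b):
    """JSD(P, Q) = H((P + Q) / 2) - (H(P) + H(Q)) / 2, in bits."""
    p = normalize(counts_a)
    q = normalize(counts_b)
    m = [(x + y) / 2 for x, y in zip(p, q)]
    return entropy_bits(m) - (entropy_bits(p) + entropy_bits(q)) / 2


def mutual_information(joint_counts):
    """I(X; Y) = H(X) + H(Y) - H(X, Y) from a 2D table of joint counts."""
    row_totals = [sum(row) for row in joint_counts]
    col_totals = [sum(col) for col in zip(*joint_counts)]
    flat = [c for row in joint_counts for c in row]
    return (entropy_bits(normalize(row_totals))
            + entropy_bits(normalize(col_totals))
            - entropy_bits(normalize(flat)))


# Two samples with no overlap are maximally divergent (1 bit).
print(jensen_shannon_divergence([10, 0], [0, 10]))
# Perfectly correlated binary variables share 1 bit of information.
print(mutual_information([[5, 0], [0, 5]]))
```

Both count lists passed to `jensen_shannon_divergence` must use the same bin order and include zero-count bins, so that each position refers to the same outcome.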

The core is written in ANSI C and wrapped in Python. THOTH is a factor of twenty faster than a Python-only implementation. We believe it will prove useful to workers in the biological and social sciences.

Latest Version of THOTH: where to get THOTH.

Documentation: how to use THOTH.

Citing THOTH: THOTH’s methods are due to a number of authors.

THOTH implements both Bayesian (Wolpert & Wolf and NSB) and non-Bayesian estimators on discrete-counts data. For the continuous case, you may be interested in Greg Ver Steeg’s NPEET (Non-Parametric Entropy Estimation Toolbox).
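As an illustration of the Bayesian approach, the sketch below computes the posterior mean of the entropy under a symmetric Dirichlet prior, which has a closed form in terms of the digamma function. It is written in the spirit of the Wolpert & Wolf estimator but is not taken from THOTH: the function names, the default prior strength `beta`, and the hand-rolled `digamma` are all assumptions made for this example. NSB goes further by averaging over a range of `beta` values, which is not shown here.

```python
import math


def digamma(x):
    """Digamma function for x > 0, via recurrence plus an asymptotic series."""
    result = 0.0
    # Shift x upward using psi(x) = psi(x + 1) - 1/x until the series is accurate.
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv = 1.0 / x
    inv2 = inv * inv
    # psi(x) ~ ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6)
    result += math.log(x) - 0.5 * inv
    result -= inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252))
    return result


def dirichlet_mean_entropy(counts, beta=1.0):
    """Posterior mean entropy in bits under a symmetric Dirichlet(beta) prior.

    `counts` must list every possible outcome, including those never observed.
    """
    alphas = [c + beta for c in counts]
    total = sum(alphas)
    nats = digamma(total + 1) - sum(a / total * digamma(a + 1) for a in alphas)
    return nats / math.log(2)


# Ten observations spread over eight possible outcomes, two of them unseen.
print(dirichlet_mean_entropy([3, 2, 2, 1, 1, 1, 0, 0]))
```

Unlike the plug-in estimate, this one depends on how many outcomes are possible, not only on those observed, so the zero-count bins in the input matter.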

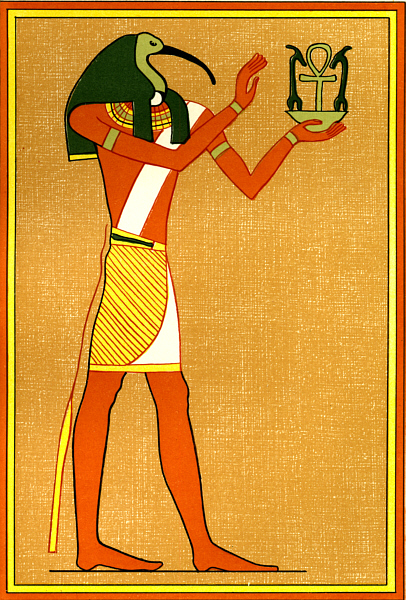

THOTH is named, playfully, after the Ancient Egyptian deity. Thoth was credited with the invention of symbolic representation, and his tasks included judgement, arbitration, and decision-making among both mortals and gods, making him a particularly appropriate namesake for the study of information in both the natural and social worlds.

Development of THOTH is supported in part by the National Science Foundation under Grant No. EF-1137929. Any opinions, findings and conclusions or recommendations expressed by THOTH are those of THOTH and do not necessarily reflect the views of the National Science Foundation (NSF).

Development of THOTH is also supported by the Emergent Institutions project.